In the fast-paced world of SEO, rankings fluctuate constantly. This is part and parcel of search engine optimisation. However, if your rankings have dropped dramatically, there may be a more serious issue. Time is money, and the longer your rankings stay hidden on pages 2, 3 or 4 of the SERPs, the more money you can potentially lose.

The good news is that a few standard checks can quickly reveal why your rankings have dropped. Running this emergency spot check will often get you to the bottom of a collapse in rankings. Finding the problem and fixing it is usually a much better solution than trying to counteract it with extra link building. Follow the steps below to uncover any potential issues, which in turn will save your rankings, not to mention your money.

Broken Links

A good place to start is to check whether your site has any broken links. This can be done through Google Webmaster Tools, where they will quickly show up in the ‘crawl errors’ section. If a large number of pages are not found (or redirected), Google may deem the site low quality and start to place you lower down the rankings.
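If you would rather test a list of URLs yourself, the check can be sketched in a few lines of Python. This is a minimal sketch, not a replacement for the crawl errors report: the function names are made up for illustration, and because `urlopen` follows redirects automatically, a redirected page is reported by the status of its final destination.

```python
from urllib.error import HTTPError
from urllib.request import Request, urlopen


def fetch_status(url, timeout=10):
    """Return the HTTP status code for url, or None if no response arrived."""
    request = Request(url, method="HEAD", headers={"User-Agent": "link-spot-check"})
    try:
        with urlopen(request, timeout=timeout) as response:
            return response.status
    except HTTPError as error:
        # 4xx and 5xx responses are raised as exceptions but still carry a code.
        return error.code
    except OSError:
        # DNS failures, refused connections and timeouts end up here.
        return None


def find_broken_links(urls, get_status=fetch_status):
    """Map every URL that did not answer with a 2xx status to what it returned."""
    broken = {}
    for url in urls:
        status = get_status(url)
        if status is None or not 200 <= status < 300:
            broken[url] = status
    return broken
```

Calling `find_broken_links` with the URLs from your sitemap or internal links returns only the pages that need attention. The `get_status` parameter exists so the logic can be tried out without touching the network.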

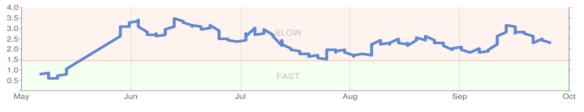

Site Speed

This is not a huge factor, but if your site speed has taken a dip it can influence your search engine rankings. Development work or a change of host can alter the loading time of the site. Again, this can easily be spotted in the ‘labs’ section of Google Webmaster Tools. The tool will give you a graph showing what is considered acceptable.

Duplicate Content

Google’s algorithm is extremely sensitive to duplicate content and will often penalise your rankings for it. If you have duplicated content anywhere on the web, you are risking a filter being applied to all your listings. ‘Duplicate’ includes publishing your site’s content elsewhere, having two sites, doorway pages, duplicate meta data, similar addresses or even overusing the same content to build backlinks to your domain. One quick way to spot duplication is to use a plagiarism checker. The other option is to paste your content into Google and spot similar text.
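If you already have two pieces of text to compare, a rough similarity score can be worked out locally. The sketch below uses word shingles and Jaccard similarity, which is a common way to measure overlap but is only an assumption here: it is not how Google or any particular plagiarism checker works, and the function names are illustrative.

```python
import re


def shingles(text, size=3):
    """Return the set of overlapping word sequences of the given size."""
    words = re.findall(r"\w+", text.lower())
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def similarity(text_a, text_b, size=3):
    """Jaccard similarity of the two shingle sets, from 0.0 to 1.0."""
    set_a, set_b = shingles(text_a, size), shingles(text_b, size)
    if not set_a or not set_b:
        return 0.0
    return len(set_a & set_b) / len(set_a | set_b)
```

A score close to 1.0 means the two texts are nearly identical. Where to draw the line for "too similar" is a judgment call, so treat any threshold you pick as a starting point.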

Interlinking

In the past I have seen rankings drop due to extreme interlinking between two separate sites. This is perfectly acceptable if the two domains have separate objectives, but if you’re trying to clog up the search engines with similar domains, this will often backfire. For example, it is fine for a business to have a swimsuit domain and a suncream domain and interlink once. The problem would be if the swimsuit site also had a baby swimsuit site which interlinked on every page (with keyword anchor text). This can quickly be checked with a review of the links on your site.

Reciprocal Linking

Excessive reciprocal link schemes are a thing of the past and can often hold back your rankings. If you have a high number of reciprocal links which are sitewide and on the same IP address, this will appear unnatural to search engine crawlers. To spot this, you can use any of a variety of link analysis tools.
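If your link analysis tool can export the URLs you link out to and the URLs that link back to you, finding the overlap is straightforward. The following is a small sketch under that assumption; the function names and the export format (plain lists of URLs) are hypothetical.

```python
from urllib.parse import urlparse


def domain_of(url):
    """Return the hostname of url without a leading 'www.'."""
    host = urlparse(url).hostname or ""
    return host[4:] if host.startswith("www.") else host


def reciprocal_domains(outbound_urls, inbound_urls):
    """List the domains that you link to and that also link back to you."""
    outbound = {domain_of(url) for url in outbound_urls}
    inbound = {domain_of(url) for url in inbound_urls}
    return sorted((outbound & inbound) - {""})
```

A handful of reciprocal domains is normal. It is a long list, especially of sitewide links, that deserves a closer look.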

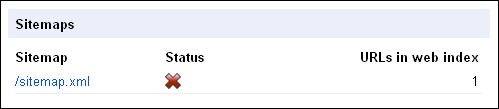

Sitemap Spot Checks

It’s worth double checking your ‘on page’ sitemap to make sure no pages have fallen out of the index. Similarly, it is worth checking that the sitemap.xml document is still being accepted in Google Webmaster Tools. This can be spotted on the main dashboard.
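To confirm that the pages you care about are actually listed in sitemap.xml, you can parse the file and compare it with your own list. This sketch assumes a standard sitemap using the sitemaps.org namespace (not a sitemap index file), and the function names are illustrative.

```python
import xml.etree.ElementTree as ET

# Namespace used by standard sitemap.xml files.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text):
    """Return the set of URLs listed in <loc> elements of a sitemap."""
    root = ET.fromstring(xml_text)
    locations = root.findall("sm:url/sm:loc", SITEMAP_NS)
    return {loc.text.strip() for loc in locations if loc.text}


def missing_from_sitemap(xml_text, expected_urls):
    """Return the expected URLs that the sitemap does not list."""
    return sorted(set(expected_urls) - sitemap_urls(xml_text))
```

Pass in the contents of your sitemap.xml and the list of pages you expect to rank. Anything returned by `missing_from_sitemap` is worth investigating.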

Robots.txt

It’s relatively easy to exclude pages by mistake in a robots.txt file. If there is a particular section of your site that is not ranking well, it may be because the pages have been disallowed in the robots.txt file. This will take a matter of seconds to double check.
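Python's standard library includes a robots.txt parser, so the check can also be scripted. The sketch below is a rough guide only: `urllib.robotparser` applies the first matching rule, which can differ from the way Google resolves conflicting Allow and Disallow lines, and the `blocked_urls` helper is a name made up for this example.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt content disallows."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]
```

Paste the contents of your robots.txt into `robots_txt` and pass the URLs of the section that has dropped. If any of them come back, the file is the first thing to fix.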

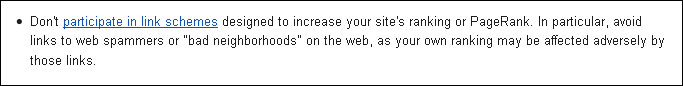

Unnatural Backlinks

Unnatural backlinks can often be the cause of a drop in rankings. Have the links been bought? Are many of the links hosted on the same IP address? Do you have multiple links coming from the same domain? These are all questions that you need to ask yourself. All of the above can be analysed with standard link analysis software. A healthy link profile is often deemed a grey area, and what works for one site may not work for another. While a link-buying scheme can have short-term benefits, the gains are never sustainable (see Google Webmaster Guidelines below).
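The same-domain and same-IP questions can be answered with a simple count if your link tool exports each backlink with its hosting IP. This is a sketch under that assumption. The `(url, ip)` input format, the function names and the 25% default threshold are all illustrative, not a rule from any search engine.

```python
from collections import Counter
from urllib.parse import urlparse


def overrepresented(values, threshold):
    """Return each value whose share of the total exceeds the threshold."""
    counts = Counter(values)
    total = sum(counts.values())
    return {value: count / total
            for value, count in counts.items()
            if count / total > threshold}


def link_concentration(backlinks, threshold=0.25):
    """Given (url, ip) pairs, return the dominant domains and dominant IPs."""
    backlinks = list(backlinks)
    domains = [urlparse(url).hostname or "" for url, _ in backlinks]
    ips = [ip for _, ip in backlinks]
    return overrepresented(domains, threshold), overrepresented(ips, threshold)
```

The result is a pair of dictionaries mapping each dominant domain or IP to its share of your backlinks. A single source supplying a large share of the profile is the kind of pattern worth reviewing by hand.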

View the Source

Simple yet effective. If your site is on a CMS, it can sometimes be weeks or even months before you view the code of the site. A simple view of the source can help you check there is no hidden text or hidden links which could be causing issues. On very rare occasions I have seen sites which have been hacked, with hidden links placed at the bottom of the page. If you have any hidden code, this can have extremely detrimental effects.
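For larger pages, part of this review can be automated. The sketch below flags links that sit inside an element hidden with an inline `display:none` or `visibility:hidden` style, or with the `hidden` attribute. It is deliberately limited: links hidden through stylesheets, off-screen positioning or matching text and background colours will not be caught, so it supplements a manual look at the source and does not replace it. The class and function names are made up for this example.

```python
import re
from html.parser import HTMLParser

HIDING_STYLE = re.compile(r"display\s*:\s*none|visibility\s*:\s*hidden", re.I)
# Elements that never have a closing tag, so they are not tracked as open.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
             "link", "meta", "source", "track", "wbr"}


class HiddenLinkFinder(HTMLParser):
    def __init__(self):
        super().__init__()
        self.open_tags = []      # (tag, is_hidden) for each open element
        self.hidden_links = []   # href values of links inside hidden elements

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        parent_hidden = bool(self.open_tags) and self.open_tags[-1][1]
        own_hidden = ("hidden" in attributes
                      or bool(HIDING_STYLE.search(attributes.get("style") or "")))
        hidden = parent_hidden or own_hidden
        if tag == "a" and hidden and attributes.get("href"):
            self.hidden_links.append(attributes["href"])
        if tag not in VOID_TAGS:
            self.open_tags.append((tag, hidden))

    def handle_endtag(self, tag):
        # Pop back to the most recent matching open tag, tolerating sloppy markup.
        for index in range(len(self.open_tags) - 1, -1, -1):
            if self.open_tags[index][0] == tag:
                del self.open_tags[index:]
                break


def find_hidden_links(html):
    """Return the href of every link found inside an inline-hidden element."""
    finder = HiddenLinkFinder()
    finder.feed(html)
    finder.close()
    return finder.hidden_links
```

Feed it the page source saved from your browser. Any link it returns that you did not put there yourself is a strong sign the site has been tampered with.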

Conclusion

Once you have reviewed all of the above, it is worth double checking the Google Webmaster Guidelines. This will help you see if your site is doing anything else which could be deemed unacceptable by the search engine. If a range of rankings suddenly drops, I would start ‘on page’ and then look at your link profile. If nothing stands out, it may be that you need to reassess your link building strategy and find a new approach to creating a variety of backlinks.

Image Source

Woman with doubtful expression and question marks all over her head via BigStock