Irish Wonder explains the origins of the Robots.txt file, why it’s one of the most important SEO documents you’ll ever write, and five mistakes that can damage your site.

What Is a Robots.txt File?

An important but sometimes overlooked element of onsite optimisation is the robots.txt file. This file alone, usually weighing no more than a few bytes, can make or break your site’s relationship with the search engines.

The robots.txt file lives in your site’s root directory (e.g. https://www.yoursite.com/robots.txt), which is the only place crawlers look for it, and exists to regulate the bots that crawl your site. This is where you can grant or deny permission to all robots, or only to specific search engine robots, to access certain pages or your site as a whole. The standard for this file was developed in 1994 and is known as the Robots Exclusion Standard or Robots Exclusion Protocol. Detailed info about the robots.txt protocol can be found at robotstxt.org.
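Because crawlers only request the file from the root of a host, the robots.txt that governs any page can be derived from that page’s URL alone. A minimal Python sketch using only the standard library; the function name robots_txt_url and the example URLs are illustrative assumptions:

```python
from urllib.parse import urlsplit, urlunsplit


def robots_txt_url(page_url: str) -> str:
    """Return the robots.txt URL that governs page_url.

    Crawlers only look for the file at the root of the host, so the
    page's path, query string and fragment are simply discarded.
    """
    parts = urlsplit(page_url)
    # Keep the scheme and host (including any port); replace everything else
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


print(robots_txt_url("https://www.yoursite.com/blog/2012/post.html?ref=home"))
# https://www.yoursite.com/robots.txt
print(robots_txt_url("http://shop.yoursite.com:8080/basket"))
# http://shop.yoursite.com:8080/robots.txt
```

Each host needs its own file: a subdomain such as shop.yoursite.com is governed by its own robots.txt, not by the one on www.yoursite.com.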

Standard robots.txt Rules

The “standards” of the Robots Exclusion Standard are pretty loose, as there is no official governing body for this protocol. However, the most widely used robots.txt elements are:

– User-agent (referring to the specific bots the rules apply to)

– Disallow (referring to the site areas that the bot named in the User-agent line is not supposed to crawl; sometimes “Allow”, which has the opposite meaning, is used instead of it or in addition to it)

Often the robots.txt file also gives the location of the XML sitemap, using a Sitemap line.

Most existing robots (including those belonging to the main search engines) “understand” the above elements; however, not all of them respect these rules and abide by them. Sometimes certain caveats apply, such as this one mentioned by Google:

While Google won’t crawl or index the content of pages blocked by robots.txt, we may still index the URLs if we find them on other pages on the web. As a result, the URL of the page and, potentially, other publicly available information such as anchor text in links to the site, or the title from the Open Directory Project (www.dmoz.org), can appear in Google search results.

Interestingly, today Google is showing a new message in its results for such pages:

“A description for this result is not available because of this site’s robots.txt – learn more.”

This further indicates that Google can still index the URLs of pages blocked in a robots.txt file, even though it does not crawl their content.

File Structure

The typical structure of a robots.txt file is something like:

User-agent: *
Disallow:

Sitemap: https://www.yoursite.com/sitemap.xml

The above example means that any bot can access anything on the site (an empty Disallow value blocks nothing) and that the sitemap is located at https://www.yoursite.com/sitemap.xml. The wildcard (*) in the User-agent line means that the rule applies to all robots.
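Python’s standard library includes a parser for this format, urllib.robotparser, which is handy for checking what a file actually permits. The sketch below (Python 3.8+ for site_maps()) feeds it the example file as text rather than fetching it, and then shows why a User-agent line should sit directly above its rules: under the original standard a blank line ends a record, and robotparser, which follows that standard, drops a User-agent line that is followed by a blank line before any rules. Google’s own parser may be more forgiving, so treat this as a check against the strict reading. SomeOtherBot is a made-up user agent name.

```python
from urllib.robotparser import RobotFileParser

# The typical file from the example above
ROBOTS_TXT = """\
User-agent: *
Disallow:

Sitemap: https://www.yoursite.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# An empty Disallow value blocks nothing, so every bot may fetch every URL
print(parser.can_fetch("Googlebot", "https://www.yoursite.com/any/page.html"))  # True
print(parser.can_fetch("SomeOtherBot", "/admin/"))  # True

# Sitemap lines are collected regardless of which record they sit in
print(parser.site_maps())  # ['https://www.yoursite.com/sitemap.xml']

# A blank line between User-agent and its rules ends the record early,
# so this parser never applies the Disallow line
broken = RobotFileParser()
broken.parse(["User-agent: *", "", "Disallow: /"])
print(broken.can_fetch("Googlebot", "/private.html"))  # True, not False
```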

Access Rules

To set access rules for a specific robot, e.g. Googlebot, the user-agent needs to be defined accordingly:

User-agent: Googlebot
Disallow: /images/

In the above example, Googlebot is denied access to the /images/ folder of a site. Additionally, a specific rule can be set to explicitly disallow access to all files within a folder:

Disallow: /images/*

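The wildcard in this case refers to all files within the folder. Strictly speaking it is redundant, since Disallow: /images/ already matches every URL that starts with /images/; note also that wildcards in paths are an extension supported by the major search engines rather than part of the original 1994 standard. But robots.txt can be even more flexible and define access rules for a specific page: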

Disallow: /blog/readme.txt

– or a certain filetype:

Disallow: /content/*.pdf
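The plain-path rules above (the Googlebot folder rule and the single-page rule) can be tried out with Python’s standard urllib.robotparser module. This is only an approximation of how a search engine reads the file: the module implements the original prefix matching and does not interpret * in paths, so the wildcard examples are left out. The example paths are hypothetical, and Bingbot stands in for any robot that has no User-agent group of its own.

```python
from urllib.robotparser import RobotFileParser

# Plain-path rules from the examples above; the /images/* and *.pdf
# wildcard variants are left out because this parser does not support them
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /images/
Disallow: /blog/readme.txt
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

checks = [
    ("Googlebot", "/images/logo.png"),        # blocked by /images/
    ("Googlebot", "/images/2012/photo.jpg"),  # blocked: prefix match covers subfolders
    ("Googlebot", "/blog/readme.txt"),        # blocked by the page rule
    ("Googlebot", "/blog/first-post.html"),   # allowed
    ("Bingbot", "/images/logo.png"),          # allowed: no group applies to Bingbot
]

for agent, path in checks:
    verdict = "allowed" if parser.can_fetch(agent, path) else "blocked"
    print(f"{agent:<10} {path:<25} {verdict}")
```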

If a site uses parameters in URLs and they result in pages with duplicate content, you can stop those URLs being crawled by using a corresponding rule, something like:

Disallow: /*?*

(meaning “do not crawl any URLs with ? in them”, since ? is how parameters are usually added to URLs).
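Since * in paths is an extension to the original standard, not every robots.txt parser handles it. The sketch below is a simplified model of the matching that Google documents: * matches any sequence of characters, a trailing $ anchors the rule to the end of the URL, and otherwise a rule applies to any path (including its query string) that starts with it. It is an illustration, not Google’s code, and it ignores how competing Allow and Disallow rules are resolved. The $ case is an addition here, showing how a rule could target only URLs that end in .pdf; the function names and paths are hypothetical.

```python
import re


def pattern_to_regex(pattern):
    """Translate a robots.txt path pattern into a compiled regular expression.

    '*' matches any run of characters and a trailing '$' anchors the
    pattern to the end of the URL; everything else is matched literally.
    """
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    # Escape each literal piece, then put '.*' back wherever a '*' was
    body = ".*".join(re.escape(piece) for piece in pattern.split("*"))
    return re.compile(body + ("$" if anchored else ""))


def rule_matches(pattern, path):
    """True if the rule applies to path (which should include any query string)."""
    # re.match anchors only at the start, so an unanchored rule is a prefix match
    return pattern_to_regex(pattern).match(path) is not None


examples = [
    ("/images/*", "/images/logo.png"),               # True
    ("/content/*.pdf", "/content/report.pdf"),       # True
    ("/content/*.pdf", "/content/report.html"),      # False
    ("/content/*.pdf", "/content/report.pdf.bak"),   # True: still a prefix match
    ("/content/*.pdf$", "/content/report.pdf.bak"),  # False: must end in .pdf
    ("/*?*", "/products?colour=red"),                # True
    ("/*?*", "/products"),                           # False
]

for pattern, path in examples:
    print(f"{pattern:<18} {path:<26} {rule_matches(pattern, path)}")
```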

That’s quite an extensive set of commands with a lot of different options. No wonder, then, that site owners and webmasters often don’t get it all right and make all kinds of (sometimes dangerous or costly) mistakes.

Common robots.txt Mistakes

Here are some typical robots.txt mistakes:

1. No robots.txt file at all

Having no robots.txt file for your site means it is completely open for any spider to crawl. If you have a simple 5-page static site with nothing to hide, this may not be an issue at all, but since it’s 2012, your site is most likely running on some sort of CMS. Unless it’s an absolutely perfect CMS (and I have yet to see one), chances are there are indexable instances of duplicate content, because the same articles are accessible via different URLs, as well as backend bits and pieces not intended for your site visitors to see.

2. Empty robots.txt

This is just as bad as not having the robots.txt file at all. Besides the unpleasant effects mentioned above, depending on the CMS used on the site, both cases also bear a risk of URLs like this one getting indexed:

https://www.somedomain.com/srgoogle/?utm_source=google&utm_content=some%20bad%20keyword&utm_term=&utm_campaign…

This can potentially get your site indexed in the context of a bad neighborhood. (The actual domain name has of course been replaced, but the domain where I noticed this type of URL being indexable had an empty robots.txt file.)
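A quick way to see the difference a single rule makes is to compare an empty file with one that disallows the tracking path, using Python’s standard urllib.robotparser. The Disallow: /srgoogle/ rule is an assumed fix for this particular case; on a real site the path to block depends on how your CMS generates such URLs. Only the path and first parameter of the truncated example URL are used.

```python
from urllib.robotparser import RobotFileParser


def can_crawl(robots_txt, agent, path):
    """Check a single path against robots.txt text held in memory."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, path)


# Path and first parameter of the example URL above
tracking_url = "/srgoogle/?utm_source=google"

empty_file = ""
with_rule = """\
User-agent: *
Disallow: /srgoogle/
"""

print(can_crawl(empty_file, "Googlebot", tracking_url))  # True: nothing is off limits
print(can_crawl(with_rule, "Googlebot", tracking_url))   # False: the path prefix is blocked
```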

3. Default robots.txt allowing access to everything

I am talking about the robots.txt file like this:

User-agent: *
Allow: /

Or like this:

User-agent: *
Disallow:

Just like in the previous two cases, you are leaving your site completely unprotected, and there is little point in having a robots.txt file like this at all, unless, again, you are running a static 5-page site à la 1998 and there is nothing to hide on your server.
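A quick check with Python’s standard urllib.robotparser confirms that, from a crawler’s point of view, the two files above are no different from an empty one. The URL paths are hypothetical examples of what a CMS might expose. The same goes for having no file at all: RobotFileParser.read() treats a 4xx response other than 401 or 403 as permission to fetch everything.

```python
from urllib.robotparser import RobotFileParser

# Three variants of robots.txt that leave the site wide open
VARIANTS = {
    "empty file": "",
    "Allow: /": "User-agent: *\nAllow: /\n",
    "empty Disallow": "User-agent: *\nDisallow:\n",
}

# Hypothetical URLs a CMS might expose
PATHS = ["/", "/admin/login", "/?p=123", "/tag/robots/page/2/"]


def blocked_paths(robots_txt, paths, agent="Googlebot"):
    """Return the paths this robots.txt tells the given agent not to crawl."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [p for p in paths if not parser.can_fetch(agent, p)]


for name, text in VARIANTS.items():
    print(f"{name}: blocked {blocked_paths(text, PATHS)}")  # always "blocked []"
```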

4. Your robots.txt contradicts your XML sitemap

Misleading the search engines is never a good idea. If your sitemap.xml file contains URLs explicitly blocked by your robots.txt, you are contradicting yourself. This often happens when your robots.txt and/or sitemap.xml files are generated by different automated tools and not checked manually afterwards.

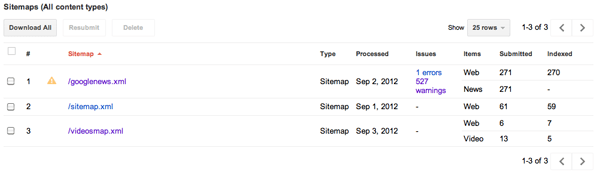

Luckily, this kind of error is relatively easy to spot using Google Webmaster Tools. If you have added your site to Google Webmaster Tools, verified it and submitted an XML sitemap for it, you can see a report on crawling the URLs submitted via the sitemap in Optimization -> Sitemaps section of GWT. Depending on how many sitemaps your site has, it can look like this:

From there, you can dig deeper into a specific sitemap with detected issues and see examples of blocked URLs:

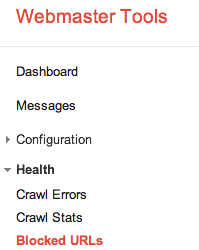

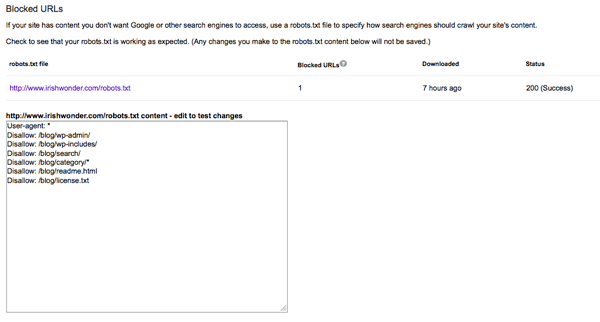
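If you also want to catch this kind of contradiction outside Google Webmaster Tools, for example as part of a deployment script, a rough equivalent can be put together with Python’s standard library. The robots.txt rules, sitemap URLs and the /checkout/ path below are hypothetical; in practice you would load your live files. Only a plain urlset sitemap is handled (not sitemap index files), and matching follows robotparser’s original-standard prefix rules rather than Google’s.

```python
import xml.etree.ElementTree as ET
from urllib.robotparser import RobotFileParser

# Hypothetical inputs; in practice, load your live robots.txt and sitemap.xml
ROBOTS_TXT = """\
User-agent: *
Disallow: /checkout/
"""

SITEMAP_XML = """\
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://www.yoursite.com/</loc></url>
  <url><loc>https://www.yoursite.com/products/</loc></url>
  <url><loc>https://www.yoursite.com/checkout/basket</loc></url>
</urlset>
"""

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def blocked_sitemap_urls(robots_txt, sitemap_xml, agent="Googlebot"):
    """Return the sitemap URLs that robots.txt tells the given agent not to crawl."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    root = ET.fromstring(sitemap_xml)
    locs = [loc.text.strip() for loc in root.findall("sm:url/sm:loc", NS)]
    return [url for url in locs if not parser.can_fetch(agent, url)]


print(blocked_sitemap_urls(ROBOTS_TXT, SITEMAP_XML))
# ['https://www.yoursite.com/checkout/basket']
```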

An additional word of praise for Google Webmaster Tools: it includes a robots.txt testing tool, and this handy little tool can make webmasters’ lives so much easier. Its location is not so obvious, but you can find it here:

The beauty of this tool is that before you apply any changes to your live robots.txt file on the server, you can test them here and check that what you want blocked actually gets blocked and that no pages you want indexed get blocked by accident:

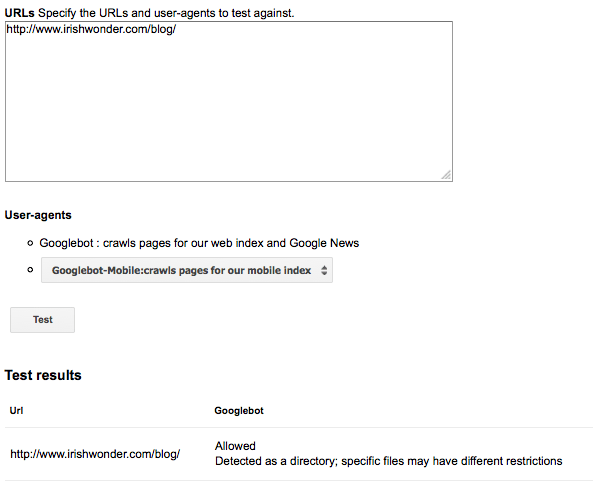

To see how Googlebot will treat specific URLs after the changes, enter a sample URL you want to test and press the button:

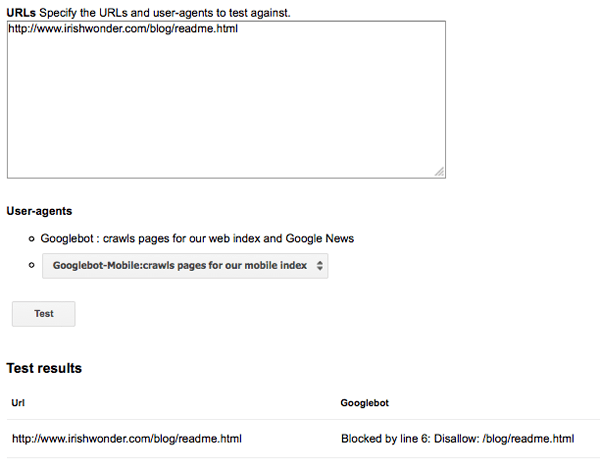

This example shows a URL that will stay accessible to Googlebot. If a URL is going to be blocked, you will see the following message:

To me this looks like a perfect tool for learning to build proper robots.txt files.
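The same before-and-after idea can also be scripted locally with Python’s standard urllib.robotparser, as a sketch rather than a replacement for Google’s tester, which remains the authoritative view of how Googlebot reads your file. One known difference: robotparser applies the first matching rule in file order, whereas Google applies the most specific (longest) matching rule, so files that mix Allow and Disallow lines can be judged differently. The files, paths and function names below are hypothetical.

```python
from urllib.robotparser import RobotFileParser


def build_parser(robots_txt):
    """Parse robots.txt text held in memory."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def changed_urls(current, proposed, paths, agent="Googlebot"):
    """List (path, before, after) for every path whose status would change."""
    old, new = build_parser(current), build_parser(proposed)
    changes = []
    for path in paths:
        before = old.can_fetch(agent, path)
        after = new.can_fetch(agent, path)
        if before != after:
            changes.append((path,
                            "allowed" if before else "blocked",
                            "allowed" if after else "blocked"))
    return changes


# Hypothetical live and proposed files
CURRENT = "User-agent: *\nDisallow: /tmp/\n"
PROPOSED = "User-agent: *\nDisallow: /tmp/\nDisallow: /blog\n"
PATHS = ["/", "/blog/", "/blogroll.html", "/tmp/cache.html"]

for change in changed_urls(CURRENT, PROPOSED, PATHS):
    print(change)
# ('/blog/', 'allowed', 'blocked')
# ('/blogroll.html', 'allowed', 'blocked')
```

The output makes a classic slip visible: Disallow: /blog has no trailing slash, so as a prefix it also blocks /blogroll.html.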

5. Using robots.txt to block access to sensitive areas of your site

If you have any areas on your site that should not be accessible, password protect them. Do not, I repeat, DO NOT ever use robots.txt for blocking access to them. There are a number of reasons why this should never be done:

- robots.txt is a recommendation, not a mandatory set of rules;

- Humans, and rogue bots that do not respect the robots protocol, can still access the disallowed areas;

- robots.txt itself is a publicly accessible file, so placing something under a Disallow rule tells everybody exactly what you are trying to hide;

- If something needs to stay completely private do not put it online, period.

One of the SEO community’s favourite pastimes is checking Google’s robots.txt to see what new secret projects it is working on, and many times in the past people have spotted projects there that had not yet been released to the public.
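Reading someone else’s robots.txt requires no special access, and pulling out its Disallow lines takes only a few lines of code. A minimal sketch that works on the file’s text (fetching it is left out); the function name and the example file, including its “secret” path, are hypothetical:

```python
def disallowed_paths(robots_txt):
    """Collect every non-empty Disallow value, i.e. what the file publicly 'hides'."""
    paths = []
    for line in robots_txt.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments and surrounding whitespace
        field, sep, value = line.partition(":")
        if sep and field.strip().lower() == "disallow" and value.strip():
            paths.append(value.strip())
    return paths


# Hypothetical file: anyone who requests /robots.txt can read these paths
EXAMPLE = """\
User-agent: *
Disallow: /admin/
Disallow: /unreleased-project/  # not announced yet
Disallow:
"""

print(disallowed_paths(EXAMPLE))  # ['/admin/', '/unreleased-project/']
```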

Quirky Uses of Robots.txt and Fun Facts

Robots.txt is a serious element of any onsite optimization project, but at the same time, lots of fun can be had with it too:

- Some people declare their love of robots via it (Vizify.com) or send you off to watch a video of a dancing robot (Moz);

- Google has used its robots.txt over Halloween to protect itself from zombies;

- Brett Tabke has used WebmasterWorld’s robots.txt file to… run a blog in it!

- Daily Mail used their robots.txt to hire an SEO a while ago (via Malcolm Coles);

- Whyte & Mackay once ran a hidden competition in their robots.txt, and those who spotted it could win some whisky (via Malcolm Coles, who definitely likes discovering such things);

- Digg has its robots.txt file cloaked – when you access it as a human visitor all you see is this:

(This post by Sebastian X explains how to cloak your robots.txt file if you’re feeling particularly paranoid. Just remember that if somebody really wants to see it, they can always spoof their user agent or look at Google’s cache of the file.)

- Among the search engines, Yahoo has the shortest robots.txt file (4 lines only), while Google’s robots.txt is massive – 291 lines.

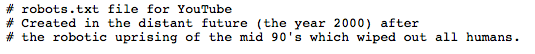

- YouTube has a reference to the Terminator plot in their robots.txt:

The views expressed in this post are those of the author and may not represent those of the Koozai team.
