In 2012, Google began a series of crackdowns on sites that it deemed to be manipulating search engine results using link schemes. To understand why, we need a bit of a history lesson and a 101 on how Google’s algorithm works.

One of the factors that determine where a page ranks in Google’s search results is the quantity and cumulative quality of the incoming links pointing at that page. Of course, as soon as this became evident to marketers, an era of link spam began, i.e. intentionally placing links on external websites pointing back to your own website with the intention of manipulating rankings.

This meant that many ‘low quality’ pages began ranking in high positions in the SERPs simply because they had lots of incoming links pointing at them. This was great for the people who owned the sites because they were getting tons of traffic without having to put much work in.

For users, however, this wasn’t so great because they were being served websites that may have been low quality (e.g. irrelevant to the query, non-comprehensive content). An example might be a hastily put-together affiliate site purely generated to drive income for a short period of time with no attention paid to design, content or usability.

Google recognized that this wasn’t great for them either; if users kept being served these kinds of results, they might eventually stop using Google in favour of another search engine, which would slowly erode the brand equity Google had built up and put a dent in its ad revenues.

In light of this, Google began handing out ‘manual penalties’ in 2012; these were actions that intentionally reduced the visibility of pages or entire sites in Google if a manual review had deemed their incoming links to be intentionally placed and/or designed to manipulate rankings. See more about this in Google’s Link Schemes documentation.

In short, Google wanted to ‘tidy up’ the search results, ensuring good quality sites were given the best positions instead of sites that simply had lots of links.

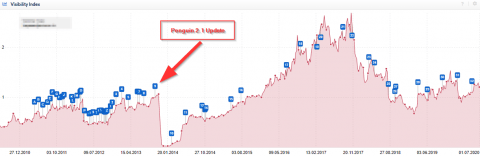

While manual penalties were handed out, Google also made an addition to their algorithm (called ‘Penguin’) which meant that unnatural link patterns could be spotted by machines. Many sites with a history of link manipulation suffered huge losses in visibility, which in turn affected traffic, conversions, and revenue. Businesses that relied heavily on organic traffic were, of course, affected the most.

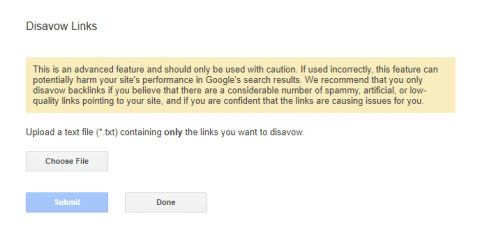

All was not lost for site owners, however. Soon after Penguin began rolling out and manual penalties started being issued, Google released the ‘Disavow Tool’. This allowed site owners to tell Google which links they didn’t want it to consider by submitting a text file.
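
To make the ‘text file’ part concrete, here is a minimal Python sketch of how such a file could be put together. It assumes the commonly documented disavow format: one entry per line, either a full URL or a `domain:` entry covering a whole site, with lines starting with `#` treated as comments. The function name `build_disavow_file` and the example domains are hypothetical, made up purely for illustration.

```python
def build_disavow_file(domains, urls, note=""):
    """Return disavow file text: one entry per line, '#' lines are comments.

    Hypothetical helper for illustration; not part of any Google tool.
    """
    lines = []
    if note:
        lines.append(f"# {note}")
    # Whole-domain entries use the 'domain:' prefix.
    lines += [f"domain:{d}" for d in sorted(set(domains))]
    # Individual pages are listed as full URLs.
    lines += sorted(set(urls))
    return "\n".join(lines) + "\n"


# Made-up entries; replace with links from your own audit.
text = build_disavow_file(
    domains=["spammy-directory.example"],
    urls=["http://link-farm.example/widgets.html"],
    note="Hypothetical entries for illustration",
)
print(text)
```

The resulting text would be saved as a plain `.txt` file and uploaded through the Disavow Tool.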

Many sites went through a process of either manually removing links or disavowing them, then submitting a ‘reconsideration request’ to Google, which would be manually reviewed. If the manual reviewers deemed that significant enough action had been taken to clean up incoming links, the penalty would be lifted and pages and sites would be able to perform at their new ‘baseline’ visibility.

Some sites recovered to where they were; others recovered, but only to a point below their ‘pre-penalty’ baseline.

History lesson over.

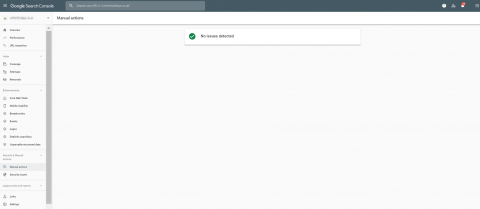

Fast forward to 2022, almost 10 years later, and there are still some reports of manual penalties being dished out (the ‘Manual actions’ report remains in Search Console, perhaps to remind site owners that it’s an ever-present threat). However, the quantities are far below what they were in 2012.

The reason for this is that Google has improved its Penguin algorithm across several iterations, to the point where most manipulative links can seemingly be picked up and ignored algorithmically. This means there’s less need for Google to manually review sites, and less need for site owners to remove or disavow links, because manipulative links are simply ignored rather than punished.

The problem is that, with regular Google Core updates as well as all the noise associated with Covid-19, it can be tricky to spot whether your site is suffering algorithmically because of the links pointing at it.

So what’s the solution if you suspect you have some less-than-desirable incoming links?

Do you proactively disavow them, which could avoid a potential manual penalty and/or give you a boost in visibility IF you’re currently being punished algorithmically?

OR…

Do you trust the algorithm to ignore your manipulative links and maintain your current visibility (if all else remains equal), but risk a manual penalty?

Here are the steps I’d take:

- Export your incoming link profile using a tool such as Ahrefs or Majestic (as well as the links that Google Search Console reports) – it’s unlikely that any tool will give you ALL your backlinks, rather each one will give you a sample; to get the most comprehensive picture, combine data sources.

- Comb through these links and look for anything that Google may consider ‘manipulative’ or ‘intentionally placed’ – including paid-for links. Try to take an objective view. An example of a manipulative link might be one with exact match anchor text (e.g. ‘cheap sunglasses’ rather than your brand name or site URL).

- Assess the ‘power’ of all the links you consider to be manipulative (Ahrefs uses ‘Domain Rating’ or ‘URL Rating’, Majestic uses ‘Citation Flow’). The higher the power of the link, the more likely it is to positively affect your visibility (or negatively affect it if you disavow it).

- Start by disavowing the ‘low power’ manipulative links (e.g. those with an Ahrefs DR of 30 and below). Once the updated disavow file has been submitted, it may take 4-6 weeks to see an effect.

- If there’s no negative effect (or there’s a positive one), start chipping away at the higher power links. Do this incrementally so you can spot what effect disavowing a link – or group of links – has.

- Rinse and repeat. If there’s no negative effect, you can be fairly certain these links weren’t helping you anyway, and you’re at less risk of a manual penalty. If you see a positive effect, it means the links may have been holding you back.

- Periodically review your disavow file. There’s nothing to stop you un-disavowing links or domains. If you decide to do this, again make sure it’s an incremental process that will easily allow you to judge the effects.
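
The first few steps above can be sketched in Python. This is a rough illustration under stated assumptions, not a definitive audit tool: the row fields (`source_url`, `anchor`, `domain_rating`) are hypothetical and do not match the column names of any real Ahrefs, Majestic or Search Console export, the sample links are made up, and the ‘manipulative’ check is a deliberately crude exact-match anchor test. A real audit still needs human judgement.

```python
from urllib.parse import urlparse


def merge_exports(*exports):
    """Combine link lists from several tools, de-duplicating by source URL."""
    merged = {}
    for export in exports:
        for row in export:
            # Keep the first row seen for each linking page.
            merged.setdefault(row["source_url"], row)
    return list(merged.values())


def looks_manipulative(row, money_terms):
    """Crude heuristic: anchor text exactly matches a commercial keyword."""
    return row["anchor"].strip().lower() in money_terms


def first_batch(rows, money_terms, max_rating=30):
    """Return the sorted linking domains of suspicious links at or below max_rating."""
    domains = {
        urlparse(row["source_url"]).hostname
        for row in rows
        if looks_manipulative(row, money_terms)
        and row["domain_rating"] <= max_rating
    }
    return sorted(domains)


# Made-up sample data standing in for two tool exports.
tool_a = [
    {"source_url": "http://spammy-directory.example/page1",
     "anchor": "cheap sunglasses", "domain_rating": 12},
    {"source_url": "http://news-site.example/review",
     "anchor": "Acme Eyewear", "domain_rating": 71},
]
tool_b = [
    {"source_url": "http://spammy-directory.example/page1",
     "anchor": "cheap sunglasses", "domain_rating": 12},
    {"source_url": "http://strong-blog.example/post",
     "anchor": "cheap sunglasses", "domain_rating": 55},
]

links = merge_exports(tool_a, tool_b)
batch = first_batch(links, {"cheap sunglasses"})
print(batch)
```

Raising `max_rating` in later rounds (for example to 60) would pull in the higher power links, which mirrors the incremental approach: disavow one batch, wait, measure, then widen the net.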

Navigating Google’s guidelines around links can be tricky, and ultimately whether or not a link is ‘manipulative’ or ‘unnatural’ is subjective. Unfortunately, it’s Google’s opinion that matters, and if they manually review your site and think you have these types of links, you could be punished for it. This is why your backlink profile should be audited on a regular basis.

Think like Google to proactively avoid this happening in the future!
